ICL-Gait: A Real-World Multi-View Pathological Gait Dataset

Dataset | Supplementary Code

Introduction

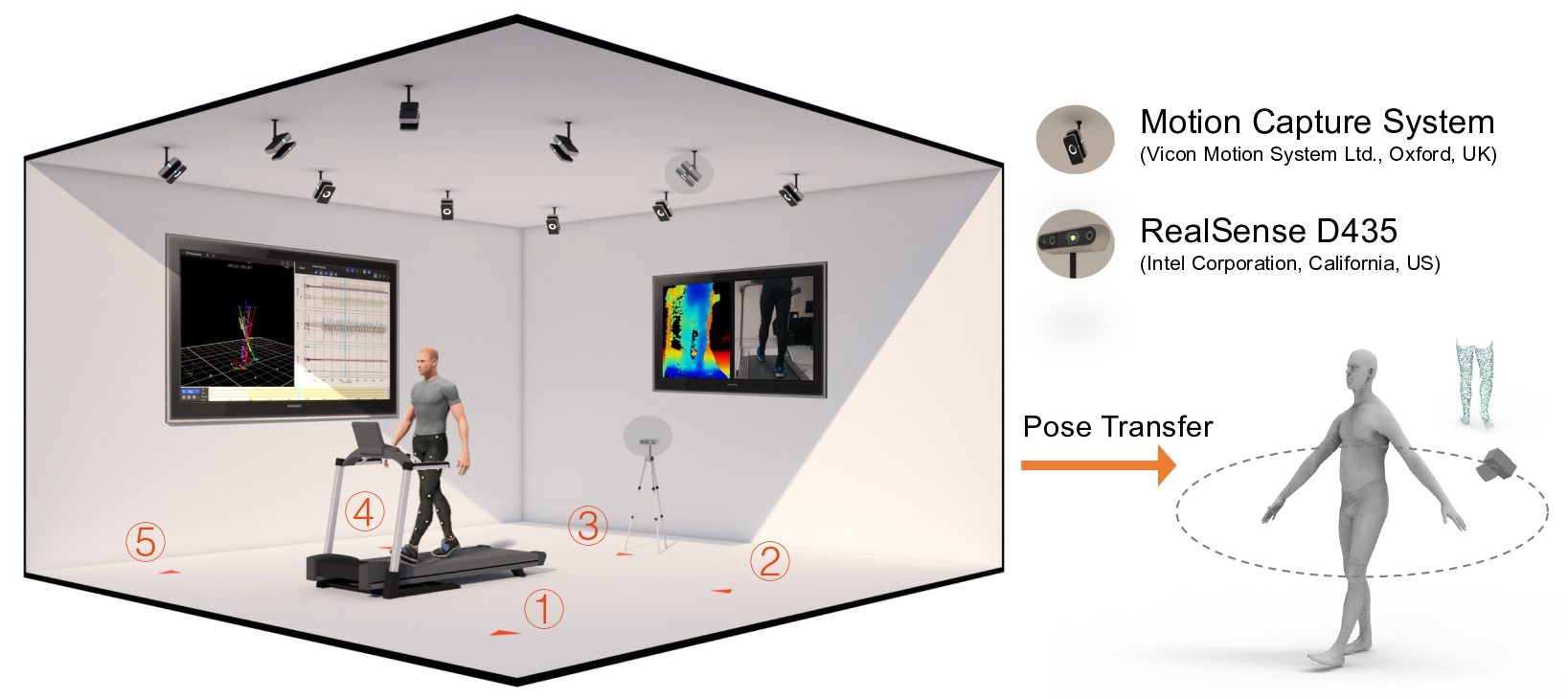

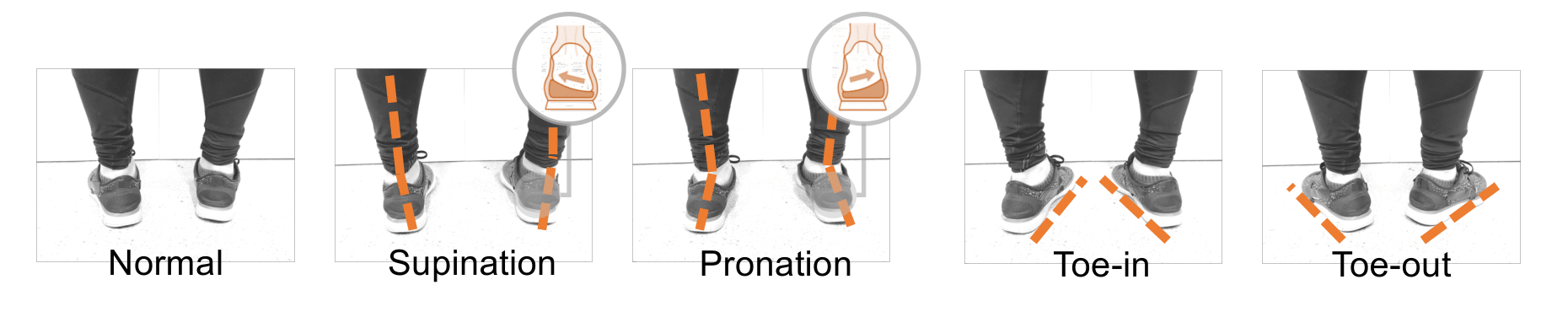

This dataset was collected for cross-subject, cross-view, Sim2Real, and cross-modality gait pose estimation and abnormal gait pattern recognition.

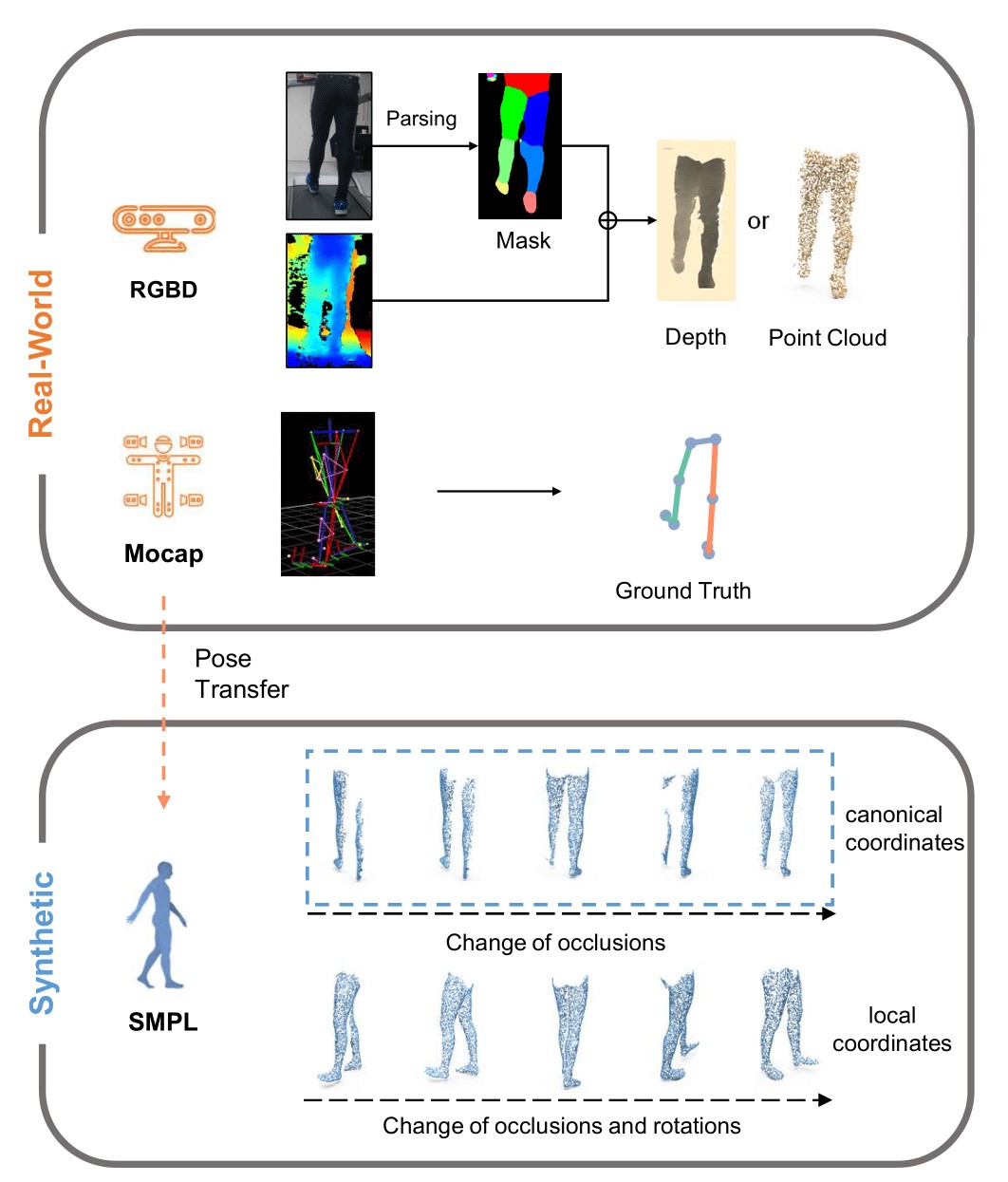

We provide data in multiple modalities, including Depth, Point Cloud, Kinematics, Segmentation Map, and 2D Keypoints. Access to the raw RGB data has been temporarily suspended for ethical reasons.

Description

Real-World Dataset

Synthetic Data

Dataset

An example for visualization can be downloaded here. To access the full dataset, please sign the form; you will then be given further instructions for downloading it.

Supplementary Code

We provide scripts for visualizing data from the different modalities, generating synthetic data, and setting up the benchmark experiments.

Citation

Please cite this work if you use this dataset in your own work.

Please also consider citing the following if you find this work helpful.

Acknowledgement

This project was approved by the College Ethics Committee of Imperial College London (reference No. 18IC4915). We are grateful to the subjects who participated in our study.

For any questions regarding this dataset, please contact Xiao Gu (xiao.gu17@imperial.ac.uk).